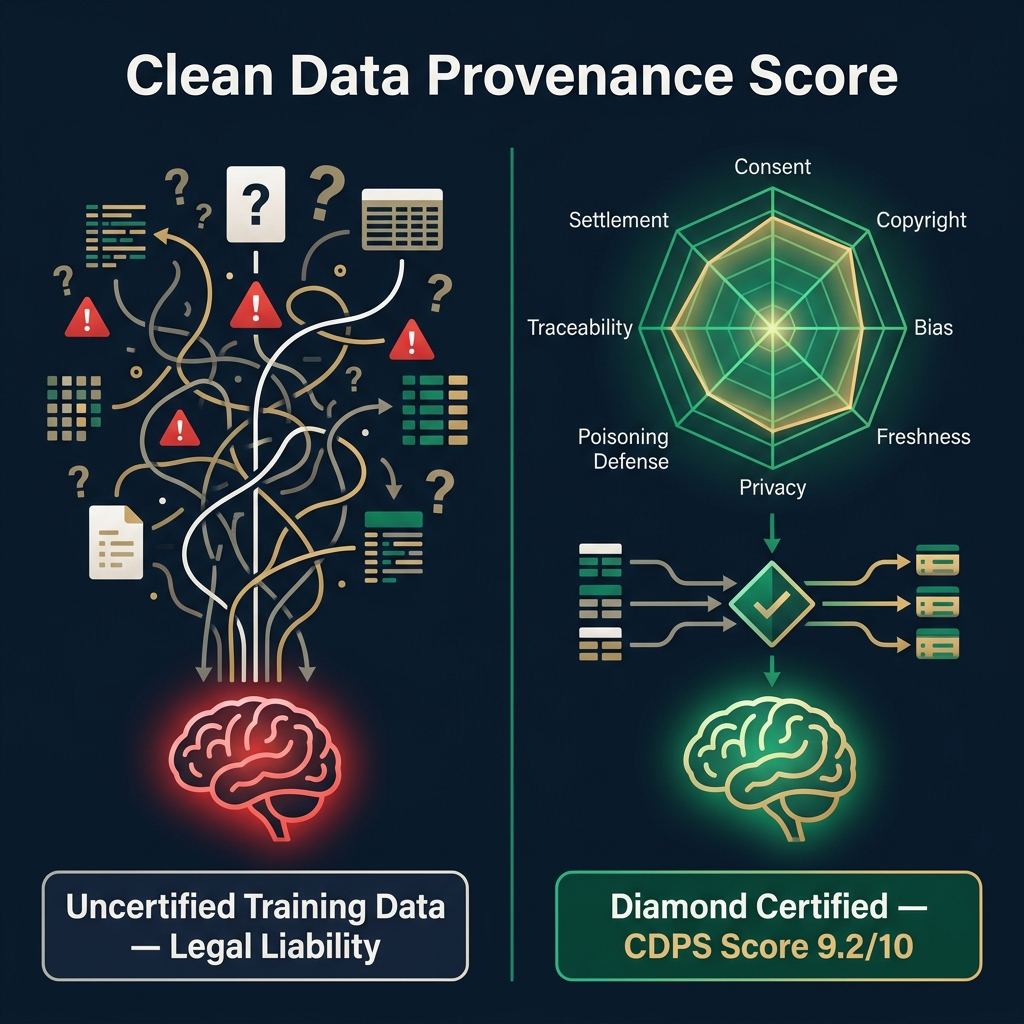

The Clean Data Provenance Score (CDPS) is a free, open standard (Apache 2.0) that answers a question every AI company will face before regulators: "How clean is this training dataset?" CDPS scores datasets on 8 orthogonal axes, from creator consent to adversarial poisoning resistance, and produces one of four grades: 💎 Diamond, ✅ Clean, ⚠️ Partial, or ❌ Uncertified. It maps directly to the EU AI Act, ISO 42001, NIST AI RMF, and 10 more jurisdictions. The standard is open. The engine that writes scores steganographically into media? That's FORTRESS.

🔴 The Problem: A $170 Billion Question Nobody Can Answer

The New York Times sued OpenAI. Getty Images sued Stability AI. Authors have sued Meta. Every major AI company now faces a fundamental question it cannot answer with confidence: "How clean is our training data?"

There is no universally accepted answer because there is no universally accepted scoring system. The landscape is fragmented:

| Approach | What It Does | What It Misses |
|---|---|---|
| C2PA | Proves where content came from | Doesn't score training data quality |
| Fairly Trained | Certifies companies as ethical | Binary pass/fail, no dataset-level granularity |
| D&TA Standards | Defines metadata fields to document | No scoring rubric, just a vocabulary |
| Spawning.ai | Provides opt-out signals for creators | Opt-out only, no quality measurement |
| HuggingFace Data Cards | Self-reported dataset documentation | No verification; self-reported by data providers |

Each addresses a piece of the puzzle. None provides a quantitative, multi-dimensional, machine-verifiable certification that a regulator, insurer, or acquirer can trust.

CDPS does.

🟢 The Solution: 8 Axes, 1 Score, Zero Ambiguity

CDPS evaluates any AI training dataset across 8 independent axes, each scored 0–10, weighted, and aggregated into a single composite score and human-readable grade.

The 4 Grades

| Grade | Score | What It Means |
|---|---|---|
| 💎 Diamond | 9.0 – 10.0 | Full consent, full traceability, royalty rails active, zero PII, zero poisoning risk |
| ✅ Clean | 7.0 – 8.99 | Strong provenance, minor gaps documented and acknowledged |
| ⚠️ Partial | 4.0 – 6.99 | Mixed provenance, consent gaps present, PII risk likely |
| ❌ Uncertified | 0.0 – 3.99 | No provenance, unknown consent, high legal liability |
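The grade bands above can be read as a simple threshold lookup. The sketch below is illustrative only: the function names are hypothetical, the per-axis weights are not given in this section (equal weights are assumed), and treating each lower bound as inclusive is also an assumption.

```python
def composite_score(axis_scores, weights=None):
    """Weighted mean of the 8 axis scores, each on a 0-10 scale."""
    if len(axis_scores) != 8:
        raise ValueError("CDPS expects exactly 8 axis scores")
    if any(not 0 <= s <= 10 for s in axis_scores):
        raise ValueError("each axis score must be within 0-10")
    if weights is None:
        weights = [1.0] * 8  # ASSUMPTION: equal weights; the real weights are not stated here
    if len(weights) != 8:
        raise ValueError("one weight per axis is required")
    return sum(s * w for s, w in zip(axis_scores, weights)) / sum(weights)


def cdps_grade(score):
    """Map a composite score to a grade using the bands in the table."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("composite score must be within 0-10")
    if score >= 9.0:
        return "Diamond"
    if score >= 7.0:
        return "Clean"
    if score >= 4.0:
        return "Partial"
    return "Uncertified"


example_axes = [10, 10, 10, 10, 4, 4, 4, 4]  # made-up scores for illustration
example_grade = cdps_grade(composite_score(example_axes))
```

Under these assumptions a dataset with strong scores on half the axes and weak scores on the other half still lands in a middle band, which is the behavior a weighted mean implies.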

🔧 How CDPS Fits Into the AI Data Economy

CDPS is the open rubric — the scoring methodology. Anyone can evaluate their datasets using the 8 axes for free. But the truly transformative integration happens when CDPS connects to the FAIR Protocol ecosystem:

| Layer | License | What It Does |
|---|---|---|
| CDPS Standard | Apache 2.0 (Open) | Defines the 8-axis scoring rubric |
| FAIR Reader SDK | Apache 2.0 (Open) | Reads scores from watermarked media |
| FORTRESS DWT Engine | Proprietary | Writes scores steganographically into media; survives 12 AI generations |
| Clearinghouse | Proprietary | Validates, stores, and settles CDPS attestations on-chain |

Think of it like SSL: the cryptographic standard is open (TLS). The certificates are issued by trusted authorities (Verisign, Let's Encrypt). The commerce infrastructure built on that trust (Stripe, Visa) generates billions. CDPS is the standard. FORTRESS is the certificate authority. The Clearinghouse is the payment rail.

📜 Global Regulatory Compliance — 13 Jurisdictions, 1 Standard

CDPS was designed from day one to map directly to the regulatory requirements AI companies face worldwide:

| Regulation | Jurisdiction | CDPS Axes Covered |
|---|---|---|
| EU AI Act Art. 10, 50, 53 | EU/EEA | Axes 1, 2, 3, 5, 7, 8 |
| GDPR Art. 5, 6, 9 | EU/EEA | Axis 5 |
| ISO/IEC 42001 | Global | All 8 axes |
| NIST AI RMF | USA | Axes 3, 5, 6 |
| California SB 942 | USA (CA) | Axes 1, 2, 7 |
| China Cybersecurity Law (2026) | China | Axes 5, 6, 7 |
| Japan IP Code (emerging) | Japan | Axes 1, 2 |
| Korea AI Basic Act | South Korea | Axes 3, 5, 7 |

No other data provenance standard currently maps to all of these jurisdictions. This is by design — CDPS was built for sovereign, global deployment.
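One way to use the mapping table is as a lookup: given the regulations a team must satisfy, collect the axes that need evidence. The sketch below copies the axis numbers from the table; the dictionary and function names are hypothetical and not part of the CDPS specification.

```python
ALL_AXES = set(range(1, 9))

# Axis coverage per regulation, transcribed from the table above.
REGULATION_AXES = {
    "EU AI Act Art. 10, 50, 53": {1, 2, 3, 5, 7, 8},
    "GDPR Art. 5, 6, 9": {5},
    "ISO/IEC 42001": set(ALL_AXES),
    "NIST AI RMF": {3, 5, 6},
    "California SB 942": {1, 2, 7},
    "China Cybersecurity Law (2026)": {5, 6, 7},
    "Japan IP Code (emerging)": {1, 2},
    "Korea AI Basic Act": {3, 5, 7},
}


def axes_required(regulations):
    """Return the sorted union of axes covered by the named regulations."""
    required = set()
    for name in regulations:
        required |= REGULATION_AXES[name]  # KeyError signals an unknown regulation
    return sorted(required)


us_scope = axes_required(["NIST AI RMF", "California SB 942"])
```

For a team in scope for NIST AI RMF and California SB 942, this returns axes 1, 2, 3, 5, 6, and 7.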

🕸️ Deep XPollination — 7 Main BPC, 27 Sub-BPC, 8 Standards Evaluated

Scope: This comparison evaluates AI Training Data Provenance & Governance Standards as of April 2026.

Disclosure: CDPS (FAIR Protocol) is our own solution. We scored it first (before competitors) to avoid anchor bias. All scores reflect publicly verifiable information.

BPC 1: Creator Rights & Consent (Weight: 20%) · CDPS: 9.3

Measures: Does the standard enable explicit creator consent, opt-out enforcement, and granular permission signaling for AI training use?

| Sub-BPC | CDPS | C2PA | D&TA | Fairly | Spawn. | HF | IPTC | MIT |
|---|---|---|---|---|---|---|---|---|
| Explicit opt-in signals: Machine-readable consent declaration per asset | 10 | 5 | 6 | 7 | 8 | 3 | 4 | 5 |
| Opt-out enforcement: Respects robots.txt, ai.txt, DNTR registries | 9 | 4 | 5 | 8 | 10 | 4 | 3 | 6 |
| Granular permissions: Per-use-case: training, RAG, display, research | 10 | 6 | 7 | 3 | 5 | 2 | 5 | 3 |
| Consent verification: Cryptographic proof of consent (DID, signature) | 8 | 7 | 4 | 5 | 4 | 1 | 3 | 4 |
| Average | 9.3 | 5.5 | 5.5 | 5.8 | 6.8 | 2.5 | 3.8 | 4.5 |
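The Average row appears to be the arithmetic mean of the sub-BPC scores, rounded to one decimal with halves rounded up (for example, 9.25 is shown as 9.3). A minimal sketch of that calculation follows; the function name is hypothetical and the rounding rule is inferred from the table, not stated by the source. It uses `decimal` because Python's built-in `round` rounds exact halves to the nearest even digit.

```python
from decimal import Decimal, ROUND_HALF_UP


def sub_bpc_average(scores):
    """Mean of integer sub-BPC scores, rounded half-up to one decimal place."""
    if not scores:
        raise ValueError("at least one sub-BPC score is required")
    total = sum(Decimal(s) for s in scores)
    mean = total / Decimal(len(scores))
    return mean.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


cdps_bpc1 = sub_bpc_average([10, 9, 10, 8])      # CDPS column of the table above
spawning_bpc1 = sub_bpc_average([8, 10, 5, 4])   # Spawn. column of the table above
```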

BPC 2: Regulatory Compliance Coverage (Weight: 18%) · CDPS: 9.5

Measures: Number of jurisdictions and regulatory frameworks the standard explicitly maps to, with documented compliance controls.

| Sub-BPC | CDPS | C2PA | D&TA | Fairly | Spawn. | HF | IPTC | MIT |
|---|---|---|---|---|---|---|---|---|
| EU AI Act Art. 10/50/53: Data governance, GPAI transparency, output labeling | 10 | 8 | 7 | 5 | 4 | 4 | 6 | 5 |
| GDPR & Privacy regs (Art. 5/6/9): Lawful basis, special categories, data minimization | 10 | 7 | 7 | 6 | 6 | 3 | 5 | 4 |
| ISO/IEC 42001 & 5338 alignment: AI management system controls, lifecycle readiness | 10 | 8 | 7 | 5 | 3 | 3 | 6 | 5 |
| Non-EU jurisdictions (US/CN/JP/KR/BR): NIST, China CyberSec, Japan Art.30-4, Korea AI Act | 8 | 7 | 5 | 4 | 3 | 3 | 4 | 4 |
| Average | 9.5 | 7.5 | 6.5 | 5.0 | 4.0 | 3.3 | 5.3 | 4.5 |

BPC 3: Technical Rigor & Scoring Granularity (Weight: 15%) · CDPS: 9.5

Measures: Quantitative scoring precision, multi-dimensional evaluation, cryptographic verification, and adversarial testing capabilities.

| Sub-BPC | CDPS | C2PA | D&TA | Fairly | Spawn. | HF | IPTC | MIT |
|---|---|---|---|---|---|---|---|---|
| Scoring granularity: Continuous 0–10 vs binary pass/fail vs none | 10 | 5 | 4 | 2 | 3 | 3 | 4 | 7 |
| Multi-dimensional evaluation: Number of independent measurable axes (≥5 = excellent) | 10 | 5 | 7 | 2 | 2 | 4 | 6 | 5 |
| Cryptographic verification: PQC signatures, hash chains, tamper-proof attestations | 9 | 10 | 3 | 2 | 3 | 2 | 3 | 5 |
| Adversarial/poisoning evaluation: Explicit axis for data poisoning detection & defense | 9 | 3 | 2 | 1 | 1 | 2 | 1 | 6 |
| Average | 9.5 | 5.8 | 4.0 | 1.8 | 2.3 | 2.8 | 3.5 | 5.8 |

BPC 4: Openness & Freedom to Operate (Weight: 15%) · CDPS: 8.5

Measures: Open-source availability, license permissiveness, vendor lock-in risk, and interoperability with other standards.

| Sub-BPC | CDPS | C2PA | D&TA | Fairly | Spawn. | HF | IPTC | MIT |
|---|---|---|---|---|---|---|---|---|
| Open-source codebase: SDK, schemas, and tools publicly available | 9 | 8 | 4 | 5 | 8 | 10 | 6 | 9 |
| No vendor lock-in: Can switch providers without data loss | 8 | 7 | 6 | 8 | 8 | 10 | 7 | 10 |
| Interoperability: Can embed inside or be read by other standards | 9 | 8 | 7 | 6 | 7 | 8 | 7 | 7 |
| Community governance: Open contribution model, RFCs, working groups | 8 | 9 | 7 | 7 | 6 | 9 | 8 | 8 |
| Average | 8.5 | 8.0 | 6.0 | 6.5 | 7.3 | 9.3 | 7.0 | 8.5 |

BPC 5: Settlement & Creator Monetization (Weight: 12%) · CDPS: 10

Measures: Automated royalty payment infrastructure, per-asset pricing metadata, and zero-friction settlement capabilities.

| Sub-BPC | CDPS | C2PA | D&TA | Fairly | Spawn. | HF | IPTC | MIT |
|---|---|---|---|---|---|---|---|---|
| Automated royalty rails: Machine-triggered USDC/HBAR settlement | 10 | 2 | 2 | 3 | 1 | 1 | 1 | 1 |
| Per-asset pricing metadata: scrape_fee, training_royalty embeddable per image | 10 | 3 | 2 | 2 | 2 | 1 | 2 | 1 |
| Clearinghouse integration: Centralized or decentralized settlement API | 10 | 2 | 1 | 3 | 1 | 0 | 0 | 0 |
| Average | 10.0 | 2.3 | 1.7 | 2.7 | 1.3 | 0.7 | 1.0 | 0.7 |

BPC 6: Resilience & Survivability (Weight: 10%) · CDPS: 8.8

Measures: Does provenance data survive metadata stripping, re-encoding, AI diffusion, and deliberate tampering?

| Sub-BPC | CDPS | C2PA | D&TA | Fairly | Spawn. | HF | IPTC | MIT |
|---|---|---|---|---|---|---|---|---|
| Metadata stripping survival: Provenance survives social media upload/re-share | 10 | 3 | 2 | 0 | 1 | 0 | 2 | 1 |
| AI generational survival: Watermark readable after N encode-decode-retrain cycles | 9 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| Post-quantum readiness: ML-DSA/ML-KEM signatures vs RSA/ECDSA only | 9 | 5 | 2 | 1 | 1 | 1 | 2 | 2 |
| Tamper detection: Active alert on manipulation attempt | 7 | 8 | 3 | 2 | 3 | 1 | 3 | 3 |
| Average | 8.8 | 4.3 | 1.8 | 0.8 | 1.3 | 0.5 | 1.8 | 1.5 |

BPC 7: Adoption & Ecosystem Maturity (Weight: 10%) · CDPS: 2.8

Measures: Current market adoption, number of integrations, industry backing, and community size. (Our honest weakness.)

| Sub-BPC | CDPS | C2PA | D&TA | Fairly | Spawn. | HF | IPTC | MIT |
|---|---|---|---|---|---|---|---|---|
| Industry backing: Fortune 500 / FAANG / government endorsements | 3 | 10 | 8 | 5 | 6 | 9 | 8 | 5 |
| SDK downloads / integrations: npm/pip downloads, tool integrations | 4 | 8 | 5 | 3 | 6 | 10 | 6 | 5 |
| Hardware integration: Camera chipset, browser native, device-level | 2 | 9 | 2 | 1 | 1 | 2 | 4 | 1 |
| Academic citations: Peer-reviewed papers referencing the standard | 2 | 8 | 5 | 4 | 5 | 8 | 7 | 9 |
| Average | 2.8 | 8.8 | 5.0 | 3.3 | 4.5 | 7.3 | 6.3 | 5.0 |

We're honest: CDPS is new. C2PA and HuggingFace lead on adoption today.

📊 Weighted Composite Results

| Rank | Standard | BPC1 20% | BPC2 18% | BPC3 15% | BPC4 15% | BPC5 12% | BPC6 10% | BPC7 10% | Weighted | Label |
|---|---|---|---|---|---|---|---|---|---|---|
| 🥇 | CDPS (FAIR) | 9.3 | 9.5 | 9.5 | 8.5 | 10 | 8.8 | 2.8 | 8.6 | 🥈 Silver |
| 🥈 | C2PA v2.1 | 5.5 | 7.5 | 5.8 | 8.0 | 2.3 | 4.3 | 8.8 | 6.1 | — |
| 🥉 | D&TA | 5.5 | 6.5 | 4.0 | 6.0 | 1.7 | 1.8 | 5.0 | 4.7 | — |
| 4 | MIT DPI | 4.5 | 4.5 | 5.8 | 8.5 | 0.7 | 1.5 | 5.0 | 4.6 | — |
| 5 | Spawning.ai | 6.8 | 4.0 | 2.3 | 7.3 | 1.3 | 1.3 | 4.5 | 4.3 | — |
| 6 | IPTC 2025.1 | 3.8 | 5.3 | 3.5 | 7.0 | 1.0 | 1.8 | 6.3 | 4.2 | — |
| 7 | Fairly Trained | 5.8 | 5.0 | 1.8 | 6.5 | 2.7 | 0.8 | 3.3 | 4.0 | — |
| 8 | HuggingFace | 2.5 | 3.3 | 2.8 | 9.3 | 0.7 | 0.5 | 7.3 | 3.8 | — |

Note: CDPS receives 🥈 Silver (not Gold) because BPC 7 (Adoption) is 2.8/10 — we need community traction to pass the "no BPC below 4.0" Gold threshold. This is honest. We're building.
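The Weighted column can be reproduced as a weighted sum of the seven BPC averages using the percentages in the column headers. The sketch below uses the CDPS and C2PA rows as input; the function names are hypothetical, and the Gold check covers only the "no BPC below 4.0" rule quoted in the note, since any other Gold criteria are not described here. Recomputing from the rounded averages can differ from a published figure in the last digit.

```python
# Weights from the composite table headers (they sum to 1.0).
WEIGHTS = {
    "BPC1": 0.20, "BPC2": 0.18, "BPC3": 0.15, "BPC4": 0.15,
    "BPC5": 0.12, "BPC6": 0.10, "BPC7": 0.10,
}

CDPS = {"BPC1": 9.3, "BPC2": 9.5, "BPC3": 9.5, "BPC4": 8.5,
        "BPC5": 10.0, "BPC6": 8.8, "BPC7": 2.8}
C2PA = {"BPC1": 5.5, "BPC2": 7.5, "BPC3": 5.8, "BPC4": 8.0,
        "BPC5": 2.3, "BPC6": 4.3, "BPC7": 8.8}


def weighted_composite(scores, weights=WEIGHTS):
    """Sum of score * weight over all BPC dimensions."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same BPCs")
    return sum(scores[name] * weights[name] for name in weights)


def passes_gold_floor(scores, floor=4.0):
    """True when no BPC falls below the floor quoted in the note."""
    return min(scores.values()) >= floor


cdps_total = weighted_composite(CDPS)
cdps_gold_ok = passes_gold_floor(CDPS)  # False, because BPC7 is 2.8
```

Because the composite is a plain weighted sum, a low score on a 10% dimension costs little in the total, which is why a separate per-BPC floor is needed to block a Gold label.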

⚡ Trade-Off Analysis — 2 Codependent BPC Pairs Detected

These BPC dimensions have structural tensions that cannot both reach 10/10 without deliberate architectural dissolution.

🔴 Critical BPC 3 (Technical Rigor) ↔ BPC 7 (Adoption)

Why They Conflict: High technical rigor (PQC signatures, adversarial poisoning axes, 8-dimensional scoring) increases implementation complexity, which slows adoption. Simple standards (like HuggingFace Data Cards) get adopted faster precisely because they demand less from implementers.

Current: Rigor = 9.5, Adoption = 2.8. Sacrifice = -6.7 on Adoption.

💡 TRIZ Principle #1 — Segmentation: Create 3 tiers: CDPS Lite (3 mandatory axes, Consent + Copyright + Traceability — 5-minute implementation), CDPS Standard (all 8 axes), CDPS Diamond (8 axes + PQC + Clearinghouse settlement). This lets HuggingFace-level implementers start with Lite and upgrade. Like SSL → TLS 1.0 → 1.3.

Post-Dissolution: Rigor = 9.5 (unchanged), Adoption = 6.0 (+3.2). Effort: M | Innovation: Architectural

🟠 Significant BPC 5 (Settlement) ↔ BPC 4 (Openness)

Why They Conflict: The settlement Clearinghouse is proprietary — which reduces the "Freedom to Operate" score. Open-source purists will resist a standard that requires a proprietary payment rail for full functionality.

Current: Settlement = 10, Openness = 8.5. Mild tension (-1.5).

💡 TRIZ Principle #13 — The Other Way Round: Publish the Clearinghouse API specification (OpenAPI 3.0) as open-source. Anyone can build a compatible settlement endpoint. FORTRESS runs the reference implementation. Like how SMTP is open but Gmail is a proprietary implementation.

Post-Dissolution: Settlement = 10 (unchanged), Openness = 9.5 (+1.0). Effort: S | Innovation: Incremental
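Since the composite is a weighted sum, the effect of the two projected changes on the overall score is the sum of weight times score change. The sketch below works that arithmetic with the weights and before/after values quoted above; the names are hypothetical, and the post-dissolution scores are the article's projections, not measurements.

```python
# Weights for the two BPCs whose scores are projected to change.
WEIGHTS = {"BPC4 Openness": 0.15, "BPC7 Adoption": 0.10}

# (current score, projected post-dissolution score), as quoted in the text.
PROJECTED = {
    "BPC7 Adoption": (2.8, 6.0),
    "BPC4 Openness": (8.5, 9.5),
}


def composite_gain(changes, weights):
    """Change in the weighted composite implied by per-BPC score changes."""
    return sum(weights[name] * (after - before)
               for name, (before, after) in changes.items())


gain = composite_gain(PROJECTED, WEIGHTS)  # 0.10 * 3.2 + 0.15 * 1.0
```

Under these projections the composite would rise by roughly 0.47 points, and Adoption at 6.0 would clear the 4.0 per-BPC floor mentioned in the Silver note.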

🚀 Get Started — Score Your Dataset in 3 Minutes

The CDPS standard is open. The attestation schema is open. You can generate a score today:

2. Score each of the 8 axes for your dataset (0–10)

3. Generate a JSON attestation: cdps-attestation-v1.json

4. Publish at /.well-known/fair.json with a "cdps" block

5. (Optional) Embed steganographically via FORTRESS
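The scoring and publishing steps can be sketched as a small script that builds the JSON document. The attestation schema is not reproduced in this section, so every field name inside the "cdps" block below (`schema`, `dataset`, `axes`, `axis_1` through `axis_8`) is a placeholder assumption, as are the function name and the example scores.

```python
import json


def build_fair_document(dataset_id, axis_scores):
    """Build a dict suitable for serving at /.well-known/fair.json.

    Field names inside "cdps" are hypothetical placeholders, not the
    official cdps-attestation-v1 schema.
    """
    if len(axis_scores) != 8:
        raise ValueError("CDPS expects exactly 8 axis scores")
    if any(not 0 <= s <= 10 for s in axis_scores):
        raise ValueError("each axis score must be within 0-10")
    return {
        "cdps": {
            "schema": "cdps-attestation-v1",
            "dataset": dataset_id,
            "axes": {f"axis_{i}": s for i, s in enumerate(axis_scores, start=1)},
        }
    }


doc = build_fair_document("example-dataset", [9, 8, 7, 9, 10, 6, 8, 7])
fair_json = json.dumps(doc, indent=2)  # write this string to /.well-known/fair.json
```

Validating the scores before serialization keeps malformed attestations from being published; the real schema may require additional fields such as signatures or timestamps.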

❓ Questions This Research Opens

For AI Companies:

If the EU AI Act requires you to document training data provenance by 2027, and your datasets currently score "Uncertified" — what's your remediation timeline?

For Regulators:

Should CDPS grades be required on AI model cards, the way nutrition labels are required on food?

For Creators:

If your images are inside a Diamond-certified dataset with active settlement rails, do you actually get paid? CDPS Axis 8 says yes — automatically, via USDC.

🔗 LinkedIn · 🌐 destill.ai · 📧 IP@destill.ai