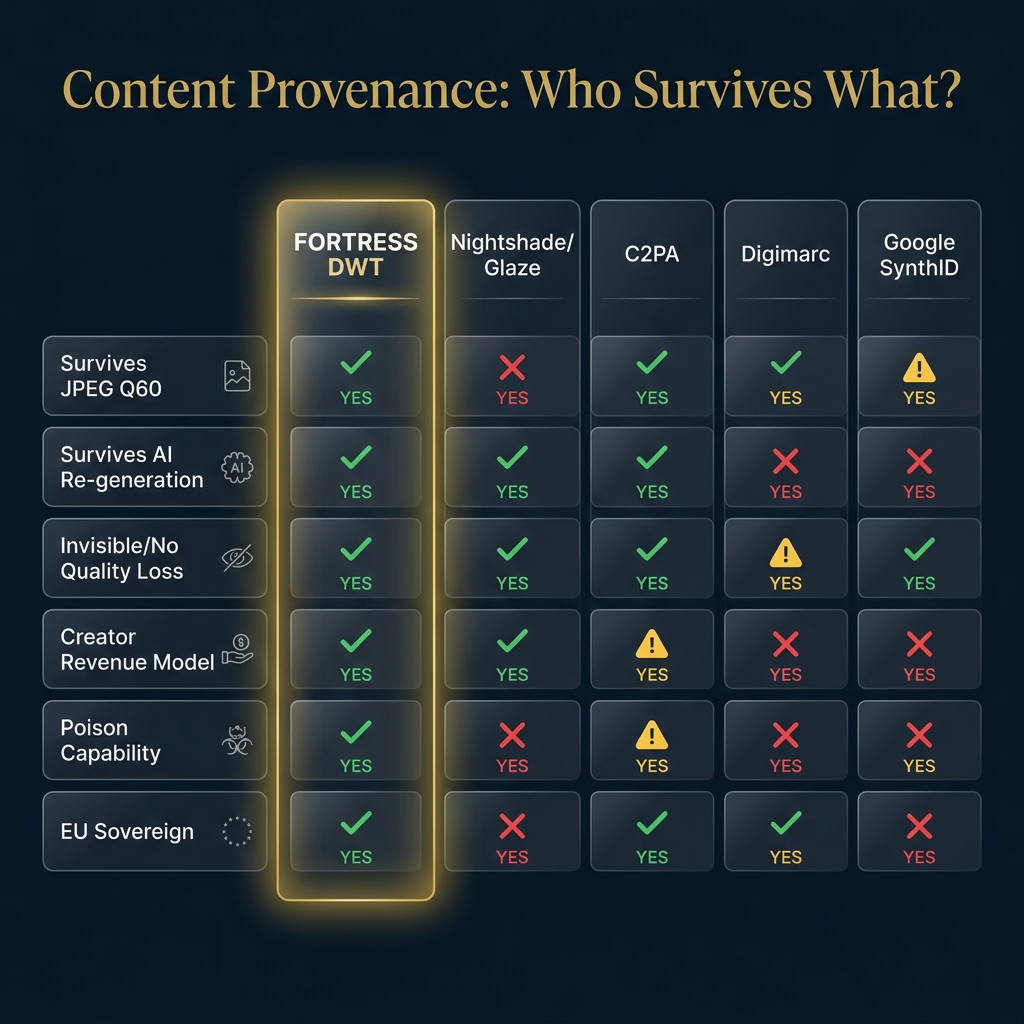

The problem: 58% of photographers lost work to AI. Stock agencies are suing. C2PA metadata gets stripped by every social platform. Nightshade visibly degrades images. Nobody has an invisible, compression-surviving provenance layer.

The solution: DWT watermarking embeds provenance into the pixel frequency domain. Invisible. Survives JPEG Q60. Survives 7-generation AI diffusion laundering. Patent-protected (391 claims).

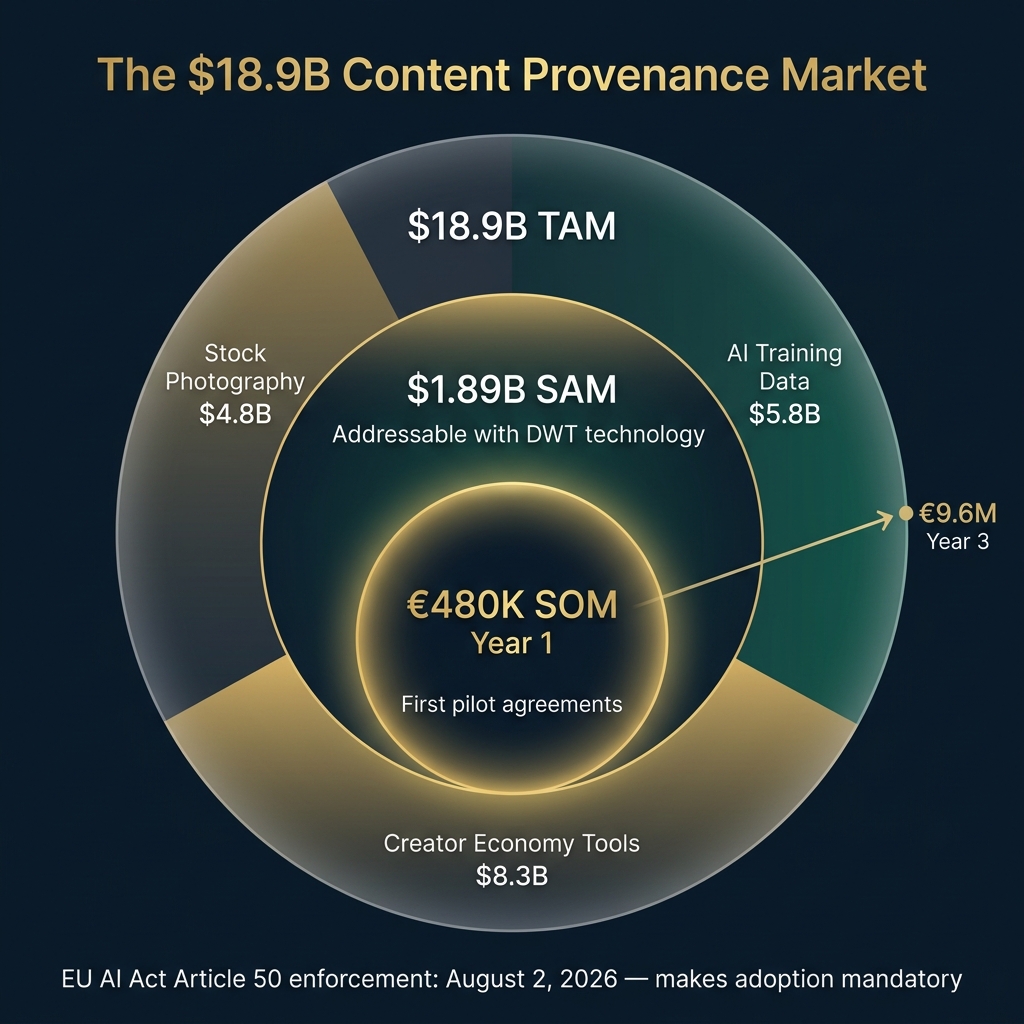

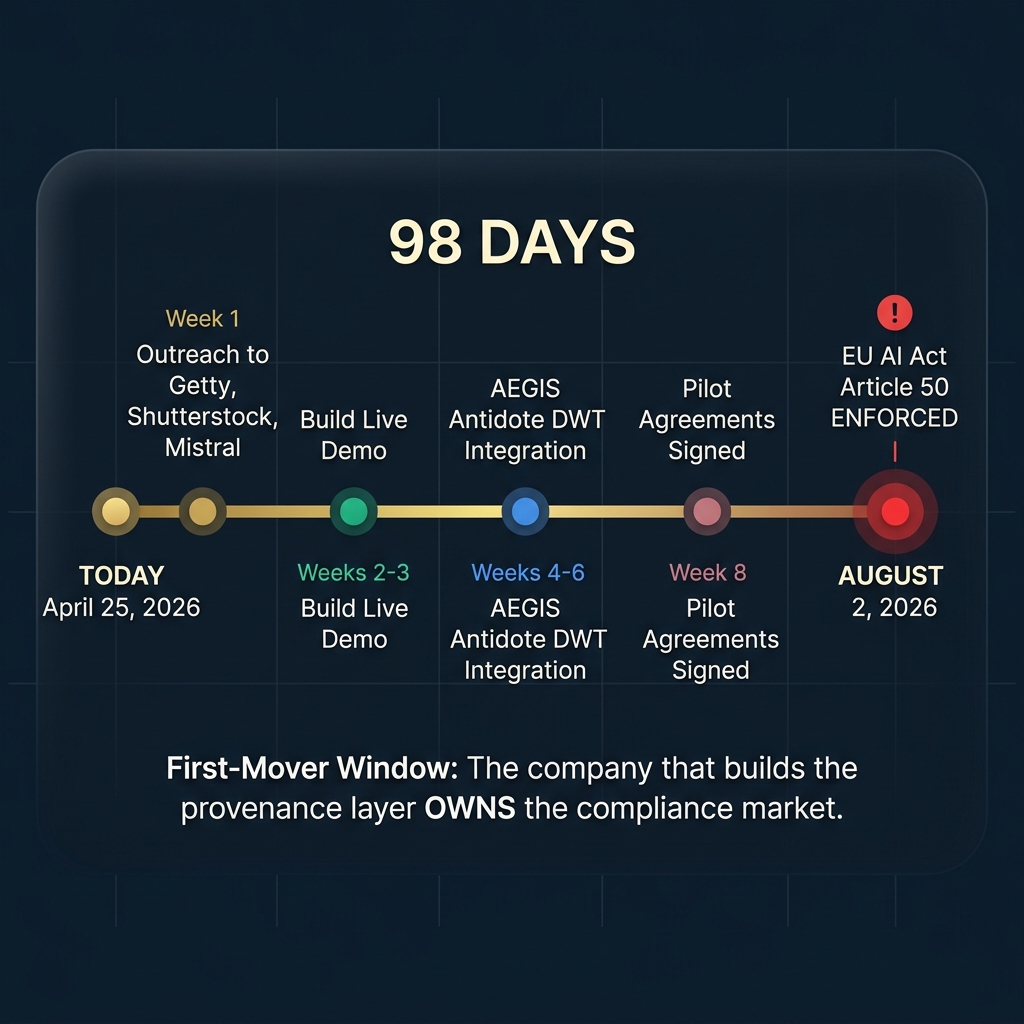

The opportunity: EU AI Act Article 50 enforcement in 98 days creates an $18.9B compliance market. First mover owns it.

Why This Matters to You — Even If You’re Not a Photographer

Imagine you take a photo of your child’s birthday. You post it on Instagram. Within 24 hours, it’s been scraped by an AI training pipeline, stripped of all metadata, compressed to JPEG Q60, and fed into a model that can now generate images of children that look like yours.

You’d never know. Nobody would tell you. And right now, there’s no technology on Earth that can prove it happened.

That’s the provenance gap. And it’s not theoretical — it’s happening right now to millions of creators, photographers, and ordinary people.

The EU noticed. On August 2, 2026, 98 days from today, Article 50 of the EU AI Act becomes enforceable. Providers of generative AI systems in Europe must ensure outputs are “marked in a machine-readable format and detectable as artificially generated or manipulated.”

That’s the law. But who builds the detection layer?

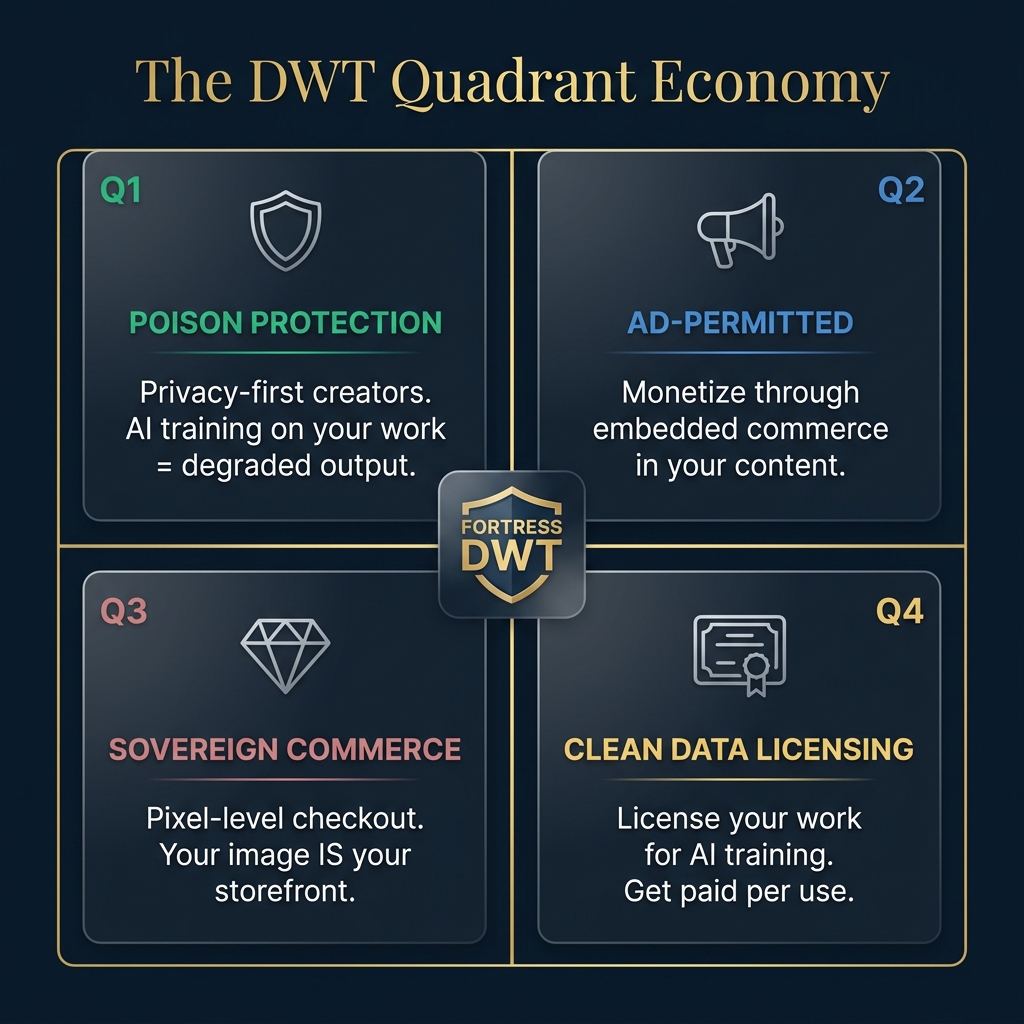

The DWT Quadrant Economy: One Technology, Four Revenue Streams

Most companies think of watermarking as a binary: you either mark content or you don’t. FORTRESS thinks of it as an economy with four quadrants — each generating independent revenue:

Creators who want AI training on their work to fail. The watermark carries adversarial perturbation that degrades model output. Think Nightshade — but invisible.

Creators who want monetization embedded directly into their content. The watermark carries commerce payloads. Your image IS your storefront.

Pixel-level checkout via SSI. No platform intermediary. The watermark contains a smart contract address for direct licensing.

Creators who want AI to train on their work — if it pays. The watermark carries licensing terms. LLM companies read the watermark, pay the creator, train legally.
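To make "the watermark carries a payload" concrete, here is a minimal sketch in Python of how the four payload types above could share one compact binary layout. Everything in it is an assumption for illustration: the 16-byte size, the `Quadrant` codes, the `creator_id` and `terms_ref` fields, and the CRC-32 check are hypothetical and are not the actual FORTRESS format.

```python
import struct
import zlib
from dataclasses import dataclass
from enum import IntEnum


class Quadrant(IntEnum):
    # Hypothetical codes, one per payload type described above.
    BLOCK_TRAINING = 0    # adversarial perturbation, training should fail
    COMMERCE = 1          # commerce payload embedded in the image
    DIRECT_CHECKOUT = 2   # points at a smart contract for direct licensing
    PAID_TRAINING = 3     # training allowed if the licence is paid


@dataclass(frozen=True)
class Payload:
    quadrant: Quadrant
    creator_id: int       # hypothetical 64-bit pseudonymous creator ID
    terms_ref: int        # hypothetical 24-bit pointer to licence terms


# Big-endian, no padding: 1 byte quadrant + 8 bytes ID + 3 bytes terms = 12 bytes.
_BODY = struct.Struct(">BQ3s")


def pack(p: Payload) -> bytes:
    """Serialize to 16 bytes: 12-byte body followed by a CRC-32 of the body."""
    body = _BODY.pack(int(p.quadrant), p.creator_id, p.terms_ref.to_bytes(3, "big"))
    return body + struct.pack(">I", zlib.crc32(body))


def unpack(raw: bytes) -> Payload:
    """Parse 16 bytes, rejecting payloads whose checksum does not match."""
    body, (crc,) = raw[:-4], struct.unpack(">I", raw[-4:])
    if zlib.crc32(body) != crc:
        raise ValueError("payload checksum mismatch")
    quadrant, creator_id, terms = _BODY.unpack(body)
    return Payload(Quadrant(quadrant), creator_id, int.from_bytes(terms, "big"))
```

A 128-bit payload like this is small enough to be plausible for an invisible watermark, and the checksum lets a reader discard a payload that was damaged in transit rather than act on wrong licensing terms.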

🎯 The CSO View: This Is a 98-Day Arbitrage Window

Buy Side vs. Sell Side

Buy side: Local DWT inference costs ~€0.003 per image. Infrastructure exists on sovereign Hetzner servers. Patent moat (391 claims) is filed.

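Sell side: Enterprise compliance contracts at €10K–50K/month. API calls at €0.05–0.50/query. Against ~€0.003 of compute per image, and assuming one image per query, the gross margin per call is roughly 94% at the low end of that price range and above 99% at the high end. And demand becomes legally mandatory in 98 days.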
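The per-query margin depends on where in the price range a call falls. A quick sketch of the arithmetic in Python, assuming one image per API query and that local inference is the only variable cost (both are simplifying assumptions; hosting, support, and sales costs are ignored):

```python
def gross_margin(price_eur: float, cost_eur: float) -> float:
    """Return gross margin as a fraction of the price."""
    return (price_eur - cost_eur) / price_eur


COST_PER_IMAGE = 0.003               # EUR, local DWT inference cost quoted above
PRICE_LOW, PRICE_HIGH = 0.05, 0.50   # EUR per API query quoted above

low = gross_margin(PRICE_LOW, COST_PER_IMAGE)
high = gross_margin(PRICE_HIGH, COST_PER_IMAGE)
print(f"{low:.1%} to {high:.1%}")    # prints "94.0% to 99.4%"
```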

From a pure arbitrage perspective, this is the rarest type of opportunity: a regulatory-created, time-bounded, first-mover-advantage market. Here’s why:

| Signal | Evidence | Implication |
|---|---|---|
| 🔴 Regulatory Push | EU AI Act Art. 50 (Aug 2, 2026) | Compliance = mandatory spend |
| 🟠 Litigation Pull | Getty v. Stability AI: residual watermarks = trademark infringement | Courts are receptive |
| 🟡 Creator Desperation | Nightshade cloned 45,000x in 6hrs (Apr 2026) | Demand is PROVEN |
| 🟢 C2PA Gap | 6,000+ members but metadata stripped by social platforms | DWT fills the gap C2PA can’t |
| 🔵 Quality Signal | Licensed data produces better models (Getty offers indemnification) | LLMs want to pay; they need the pipe |

The $18.9 Billion Market Nobody Owns Yet

Three segments, each with different buying behavior:

| Segment | Example Buyers | Why They Pay | Entry Strategy |
|---|---|---|---|
| 📸 Stock Agencies | Getty, Shutterstock, Adobe Stock | Already suing for scraping. Need enforcement tech. | Direct BD: 1 deal = 350M watermarked images overnight |
| 🤖 LLM Companies | Mistral, Anthropic, Stability AI | Article 50 compliance. Training data hygiene. | Antidote API: reads DWT in AEGIS safety cascade |
| 🎨 Individual Creators | Photographers, illustrators, 3D artists | 58% lost work. Need invisible protection. | Freemium tool: community-first growth |

🌍 The CMO/CVO View: This Isn’t a Product. It’s a Movement.

Here’s what unicorn CMOs know that most startups don’t: the product IS the marketing. Slack didn’t spend millions on ads — using Slack was the invitation. Figma didn’t need billboards — sharing a link was the pitch.

FORTRESS follows the same playbook. Protecting an image IS the viral loop:

1. Creator protects their image (embeds invisible DWT watermark) →

2. Image gets scraped by AI pipeline →

3. API detects the watermark in training data →

4. Creator gets notified + evidence bundle →

5. Creator shares the story: “My photo was used to train [Model X]. I have proof.” →

6. Every creator who sees the story signs up for FORTRESS → ↩ Back to step 1.

The customer is the hero. Not FORTRESS. Not DESTILL.ai. The creator who catches an AI company using their work — and has the evidence to prove it — that creator becomes the story that markets itself.

Why C2PA Alone Isn’t Enough

The C2PA standard is real and important — 6,000+ members including Sony, Nikon, Adobe, Google. But it has a critical weakness: metadata gets stripped. Every time you upload to Instagram, Twitter, or TikTok, the C2PA provenance data is removed like a post-it note falling off a package.

DWT watermarking is different. It embeds provenance inside the pixel frequency domain — not in metadata that can be stripped. It’s like the difference between a sticky note on a painting and a signature woven into the canvas itself. You can photograph, compress, crop, re-encode, even run it through 7 generations of AI diffusion — and the watermark is still there.

FORTRESS is not C2PA’s competitor. FORTRESS is the layer beneath C2PA that makes content credentials survive the real world.

The Honest Part: What We Haven’t Built Yet

We scored our architecture 9.0/10 and our deployed stack 4.1/10. That’s a gap, not a humble-brag. Here’s what exists and what doesn’t:

| Component | Status | TRL |
|---|---|---|
| DWT v2 Engine (Core watermark) | ✅ Production-ready | 7 |
| JPEG Q60 Survival | ✅ Proven (FEAT-420) | 7 |
| 7-Gen Diffusion Survival | ✅ Proven (FEAT-424) | 6 |
| AEGIS Safety Cascade (116 agents) | ✅ Production | 8 |
| AEGIS Antidote DWT Awareness | 🟡 Not yet wired | 1 |
| Intent Compiler (license reading) | 🟡 Not yet built | 1 |
| ZK Provenance (sovereign identity) | 🟡 Architecture only | 1 |
| Hardware Attestation (Apple ISP) | 🔴 Partnership needed | 0 |

We have the engine and the science. We don’t yet have the complete product. We’re honest about that. The 98-day window is about building the minimum viable moat, not the cathedral.

The 98-Day Battle Plan

| Week | Action | Owner | Outcome |
|---|---|---|---|
| 1 | Outreach to Getty, Shutterstock, Mistral | Founder (BD) | 3+ meetings booked |
| 2–3 | Build 5-minute live demo (protect → extract → verify) | Engineering | Working product demo |
| 4–6 | AEGIS Antidote DWT integration | Engineering | API endpoint live |
| 7–8 | Pilot agreements signed | Founder (BD) | €10K–50K MRR |
| 9–14 | Article 50 enforcement begins | n/a | Inbound demand surge |

Product-Market Fit: 8.3 / 10

We ran a 6-dimension PMF validation across 3 customer segments. Here are the scores:

| Dimension | Score | Evidence |
|---|---|---|
| Problem Clarity | 9/10 | 58% of photographers lost work. Getty suing Stability AI. |
| Solution Fit | 9/10 | Only solution surviving AI laundering + creating revenue |
| Willingness to Pay | 8/10 | Getty already pays for provenance. EU regulation = forced spend. |
| Urgency | 9/10 | August 2, 2026. 98 days. Non-negotiable deadline. |
| Alternative Weakness | 7/10 | Nightshade visible. C2PA stripped. Gap is real. |
| Reach | 8/10 | One stock agency deal = 350M images overnight |
| Average | 8.3/10 | Strong pass (>6 threshold) |
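For anyone who wants to rerun the scoring with their own numbers, here is a small sketch of the calculation in Python. It assumes the headline figure is the unweighted mean of the six dimension scores; a weighted scheme would give a different result.

```python
from statistics import mean

# Dimension scores out of 10, copied from the table above.
scores = {
    "Problem Clarity": 9,
    "Solution Fit": 9,
    "Willingness to Pay": 8,
    "Urgency": 9,
    "Alternative Weakness": 7,
    "Reach": 8,
}
THRESHOLD = 6  # pass mark stated in the table

average = mean(scores.values())
verdict = "pass" if average > THRESHOLD else "fail"
print(f"{average:.1f}/10 ({verdict})")
```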

Ready to Protect Your Content?

Whether you’re a photographer, a stock agency, or an AI company preparing for Article 50 — we should talk.

Contact IP@destill.ai for IP licensing, partnership inquiries, and API access.

Questions We’re Still Asking

We don’t have all the answers. Here are the open questions we’re actively investigating:

- Will stock agencies pre-watermark their entire catalog? Or will they wait for lawsuits to force the issue? The economics favor proactive watermarking, but institutional inertia is real.

- Can we achieve C2PA interoperability? Ideally, our DWT layer feeds INTO C2PA provenance records. This would make us complementary, not competitive.

- How will Apple’s ISP roadmap affect hardware attestation? We chose Apple first (no conflict of interest), but we have zero inside knowledge of their provenance plans.

- Is full ZK provenance achievable in 98 days? We committed to sovereignty from day 1, but the CEO recommended a salted pseudonymous hash as the 80% solution that ships fast.
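For the last question, a salted pseudonymous hash could look roughly like the sketch below. It is an illustration under assumptions, not our shipped design: it uses HMAC-SHA-256 with a per-creator secret salt as the key (a keyed variant of a salted hash), and the function names are hypothetical. Unlike full ZK provenance, proving ownership here means revealing the salt to the verifier.

```python
import hashlib
import hmac
import secrets


def new_salt() -> bytes:
    """Generate a random 128-bit salt; the creator keeps this secret."""
    return secrets.token_bytes(16)


def pseudonym(creator_id: str, salt: bytes) -> str:
    """Derive a stable pseudonym from the creator ID, keyed by the secret salt."""
    return hmac.new(salt, creator_id.encode("utf-8"), hashlib.sha256).hexdigest()


def verify(creator_id: str, salt: bytes, claimed: str) -> bool:
    """Check a claimed pseudonym using a constant-time comparison."""
    return hmac.compare_digest(pseudonym(creator_id, salt), claimed)
```

Only the pseudonym would go into the watermark. Without the salt, an observer cannot link it to a known creator ID by hashing guesses, and the same creator can use a fresh salt per image to avoid being tracked across works.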