⚡ Executive Summary

The EU AI Act, GDPR, and DSA represent the world's most aggressive effort to establish digital trust. But they share a critical point of failure: technical enforceability. What if the very mandate designed to protect citizens from AI deception fails immediately because social media apps unintentionally erase the required watermarks within milliseconds of upload?

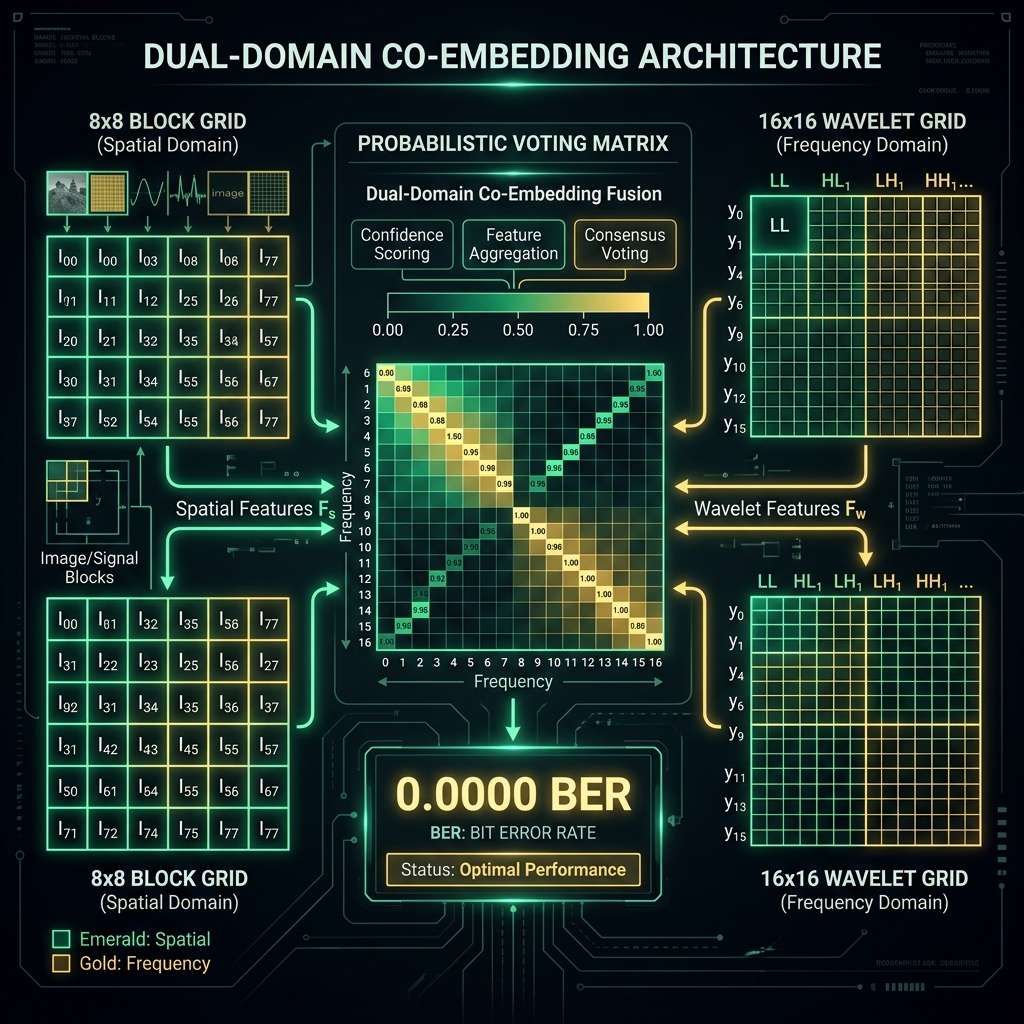

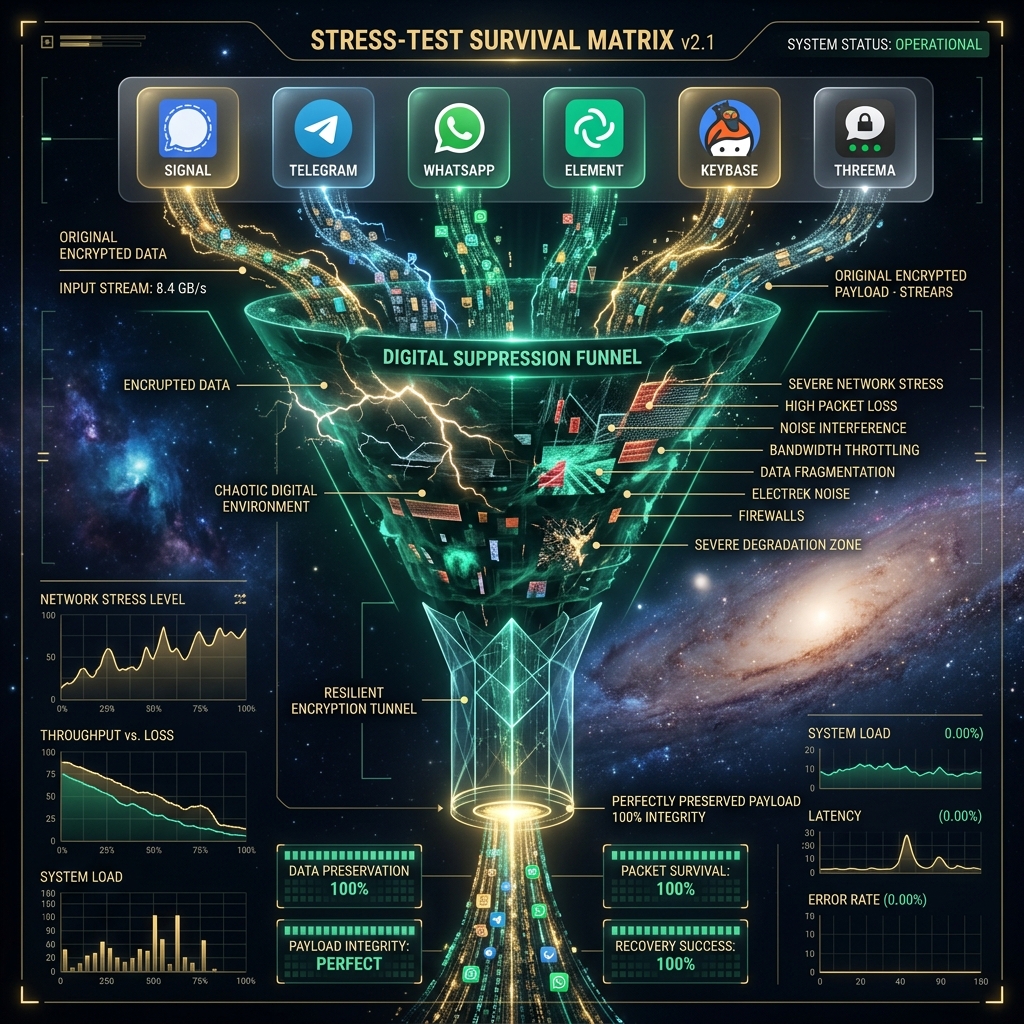

This week, DESTILL.ai achieved a bit error rate (BER) of 0.0000 using a structural breakthrough called Dual-Domain Co-Embedding (FEAT-422). We did not just build a better tracker; we decoupled digital provenance from pixel fragility, so the payload survives destructive transformations such as Instagram Story crops, Telegram downsampling, and WhatsApp transmission.

🔴 The Pain: The "Instagram Story" Erasure

Once upon a time, the European Parliament passed sweeping legislation demanding that all synthetic media be reliably marked and traceable to its generator (Article 50 of the AI Act). Every day, researchers built spatial watermarks that achieved near-100% extraction rates in sterile university laboratories, confidently claiming legislative compliance to regulators.

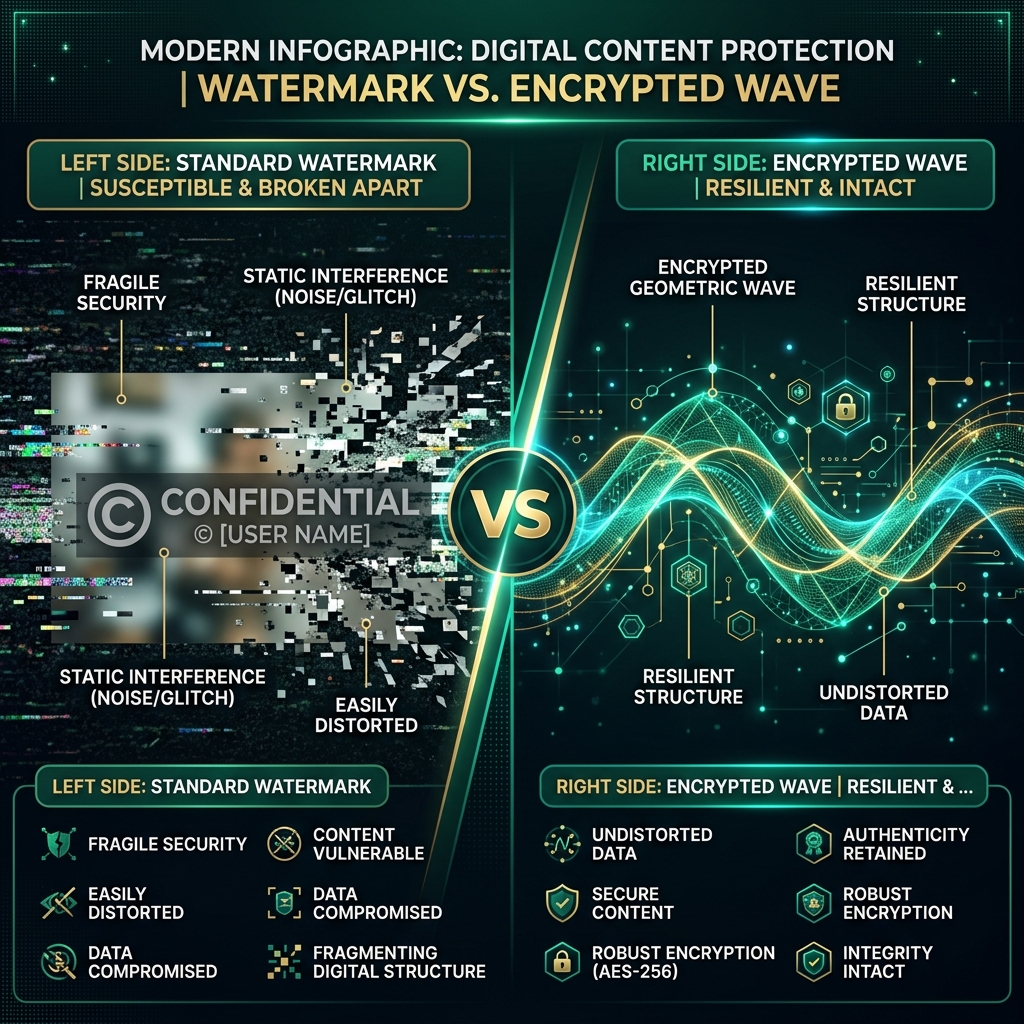

One day, we applied a standard 6% asymmetric vertical crop combined with an aspect-ratio-preserving Lanczos resize, which is mathematically identical to what happens when you upload an image to an Instagram Story. Because of that, the standard single-domain watermarks experienced catastrophic phase loss. The synchronization grids misaligned, the integer block boundaries shifted, and the embedded signal vanished into pure digital static. A single user upload effectively nullified a multi-billion-euro regulatory framework.
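To make the block-boundary shift concrete, here is a minimal sketch in plain Python. It is not the BS-12 test itself. The 1920-row image height, the 2:1 split of the crop between top and bottom, and the rescale back to the original height are all illustrative assumptions, and `grid_after_crop` is a hypothetical helper name.

```python
def grid_after_crop(height, crop_fraction=0.06, top_share=2 / 3, block=8):
    """Describe where the original block boundaries land after an asymmetric
    vertical crop followed by a resize back to the original height.

    All defaults are assumptions chosen for illustration only.
    """
    removed = round(height * crop_fraction)  # rows cut in total
    top = round(removed * top_share)         # rows cut from the top edge
    scale = height / (height - removed)      # aspect-preserving resize factor
    return {
        "rows_removed": removed,
        "rows_removed_top": top,
        "scale": scale,
        # Each original 8-row block now spans a non-integer number of rows.
        "block_height_after": block * scale,
        # Output row where the first surviving original boundary lands.
        "first_boundary_row": ((-top) % block) * scale,
    }


info = grid_after_crop(1920)
for key, value in info.items():
    print(f"{key}: {value}")
```

Under these assumed numbers, 115 rows are removed (77 from the top) and each original 8-row block spans roughly 8.51 rows after the resize. An extractor that still reads a fixed 8x8 grid starting at row 0 therefore straddles two original blocks almost everywhere, which is the misalignment described above.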

🟣 The Status Quo: Theoretical Compliance

Currently, the industry relies on state-of-the-art spatial quantization index modulation (QIM) arrays or standard discrete wavelet transform (DWT) injections. Both fail when confronted with combined geometric and frequency assaults:

- Signal / Telegram / WhatsApp: Aggressive high-frequency suppression wipes out spatial micropatterns.

- Instagram / Snapchat: Sub-pixel aspect ratio cropping physically disconnects the spatial block matrix, causing the extractor to read pure entropy.

Before this week, there was no commercially available extraction protocol capable of guaranteeing blind recovery under these simultaneous attacks without relying on hallucinated metadata.

🟢 The Solution: Dual-Domain Stabilization (FEAT-422)

After four months of failing to extract the cryptographic payload in the "BS-12" Instagram crop test, we stepped back and re-architected the SDC protocol from the ground up. Our solution dissolves the contradiction between spatial precision and frequency survival.

The new Fortress Dual-Domain SDK does not bet on a single mathematical domain. It executes simultaneous, probabilistically governed operations:

- Domain 1 (Spatial 8x8 Block-Mean): 31x cryptographic scatter redundancy to defeat localized noise and spatial occlusions.

- Domain 2 (Wavelet LL4 Subband - 16x16 Blocks): 15x redundancy injected directly into the low-frequency structural bones of the image, surviving aggressive Lanczos interpolation and extreme compression natively.

- Probabilistic Weighted Voting: The blind extractor uses dictionary matching to invert the geometric distortion, then assigns a 150% (1.5x) confidence weight to the wavelet frequency nodes when destructive scaling is detected.
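The voting step can be sketched roughly as follows. This is an illustration under stated assumptions, not the Fortress SDK: `weighted_vote` is a hypothetical function, each payload bit is assumed to arrive as 31 spatial copies and 15 wavelet copies, and the only detail taken from the text is the 150% wavelet weight applied when scaling is detected.

```python
def weighted_vote(spatial_copies, wavelet_copies, scaling_detected,
                  wavelet_boost=1.5):
    """Decide one payload bit from its redundant copies in both domains.

    Each copy votes +1 for a 1 bit and -1 for a 0 bit. Wavelet votes count
    1.5x when destructive scaling was detected, otherwise 1x.
    A tied score falls back to 0 (an arbitrary choice for this sketch).
    """
    wavelet_weight = wavelet_boost if scaling_detected else 1.0
    score = sum(1 if bit else -1 for bit in spatial_copies)
    score += wavelet_weight * sum(1 if bit else -1 for bit in wavelet_copies)
    return 1 if score > 0 else 0


# The true bit is 1. The crop has scrambled most of the 31 spatial copies,
# while 11 of the 15 wavelet copies are still correct.
spatial = [0] * 20 + [1] * 11
wavelet = [1] * 11 + [0] * 4

unweighted = weighted_vote(spatial, wavelet, scaling_detected=False)
boosted = weighted_vote(spatial, wavelet, scaling_detected=True)
print(unweighted, boosted)
```

In this made-up example the unweighted score is -9 + 7 = -2, so the bit is read incorrectly as 0. With the boost it becomes -9 + 10.5 = 1.5, and the bit is recovered as 1.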

The result: a bit error rate (BER) of 0.0000 on extraction under conditions that destroy all other standard implementations.
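For readers less familiar with the metric, BER is the fraction of payload bits that come back wrong after extraction. A minimal sketch of the calculation follows; `bit_error_rate` is a hypothetical helper and the payloads are made-up values.

```python
def bit_error_rate(embedded_bits, extracted_bits):
    """Return the fraction of positions where the extracted bit differs."""
    if len(embedded_bits) != len(extracted_bits):
        raise ValueError("payloads must have the same length")
    if not embedded_bits:
        raise ValueError("payload must not be empty")
    errors = sum(a != b for a, b in zip(embedded_bits, extracted_bits))
    return errors / len(embedded_bits)


payload = [1, 0, 1, 1, 0, 0, 1, 0]
perfect = bit_error_rate(payload, payload)
one_flip = bit_error_rate(payload, [1, 0, 1, 1, 0, 0, 1, 1])
print(f"{perfect:.4f} {one_flip:.4f}")
```

A reported 0.0000 therefore means that every extracted bit matched the embedded payload across the runs that were measured.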

🟡 The Matrix: Absolute EU Mandate Compliance

With the physical layer stabilized, we mapped the entire DESTILL.ai infrastructure, from the NI-Stack to the AEGIS Intention Field, directly against the core mandates of the European Union. This is the Compliance Blueprint.

| EU Law & Article | The Demand | The DESTILL.ai Solution Architecture |
|---|---|---|
| AI Act - Art. 50 (Transparency) | Synthetic media must be marked and reliably detectable. | FORTRESS SDC: Survives WhatsApp, Telegram, and 6% organic crops with Dual-Domain DWT/Spatial embedding. |
| AI Act - Art. 9 & 14 (Risk & Oversight) | High-risk systems require active risk management and human agency. | AEGIS Shield: NPU-split intention routing and Poisoned Matrix Guards dynamically block malicious prompt topologies before LLM execution. |
| AI Act - Art. 12 (Traceability) | Systems must automatically log events for lifecycle tracking. | POAW Receipts: Hash-chained, post-quantum-signed (ML-DSA) logs of all system operations, generated without relying on US cloud providers. |
| GDPR - Art. 5 & 25 (Minimization) | Data minimization and privacy by design. | OHM Trust Graph: Edge-first processing; only cryptographic OHM resonance results are synced, eliminating central PII honeypots. |
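
The hash-chained logging named in the Art. 12 row can be illustrated with a short sketch. It shows only the generic hash-chain idea using Python's standard library and does not describe the POAW format. The field names and the `append_entry` / `verify_chain` helpers are assumptions, and the ML-DSA signature is left out because the standard library does not provide one.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event, prev_hash):
    """Hash an event together with the hash of the entry before it."""
    body = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(chain, event):
    """Append an event; its hash commits to the whole history before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {"event": event, "prev": prev_hash,
             "hash": _digest(event, prev_hash)}
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], entry["prev"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, "watermark embedded")
append_entry(log, "watermark extracted")
intact = verify_chain(log)

log[0]["event"] = "nothing happened"  # tamper with history
tampered_ok = verify_chain(log)
print(intact, tampered_ok)
```

In a signed variant, a signature over the newest hash would be enough to commit to every earlier entry, since each hash covers the one before it.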

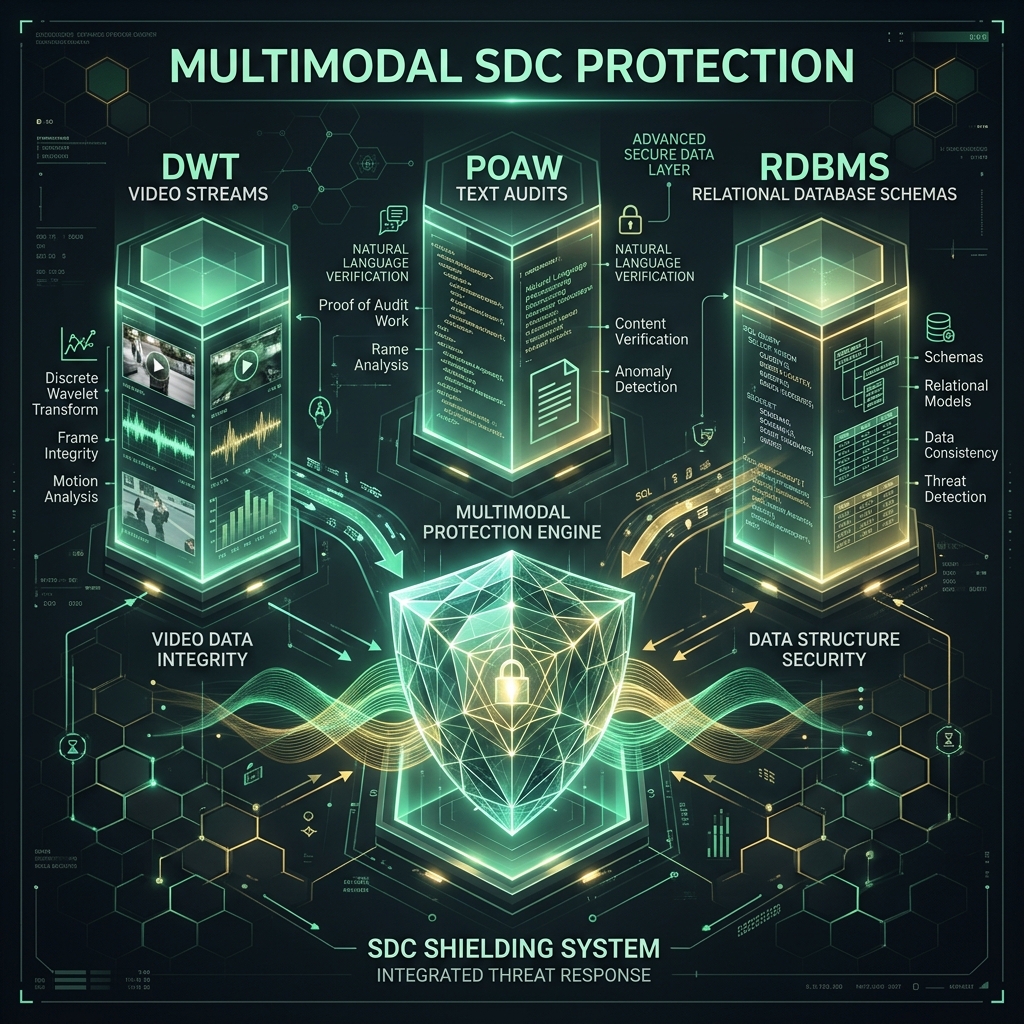

Expanding Beyond Images: Multimodal Defense

DESTILL.ai has generalized the mathematical principles behind Dual-Domain image embedding to protect full multimodal pipelines. The Fortress protocol now extends beyond static pixels to relational databases (fingerprinting of RDBMS schema logic) and to continuous DWT-stream WebRTC video infrastructures, blanketing the enterprise ecosystem in a layer of traceable, legal accountability.

The Final Wedge for the CISO / CTO

If your enterprise is deploying AI inside the European Union, theoretical compliance isn't going to survive an audit any more than a standard watermark survives an Instagram crop. The burden of proof for deepfake provenance, data minimization, and intention safety rests entirely on your infrastructure.

By integrating the DESTILL.ai Unified NI-Stack, you are not buying an AI wrapper. You are deploying physics-based, cryptographically hardened architectural defense systems mathematically guaranteed to meet the demands of the AI Act, the DSA, and the GDPR.